This project was done during the end-of-study internship I carried out as part of my academic training at École Nationale d’Ingenieurs de Brest. It covers the activities I was assigned to during my time as an intern at Hoomano, a French startup building an AI agent for professional use cases.

This AI agent called Mojodex, works manually choosing the task you want to perform before starting to talk with Mojodex itself. But, what if you could just start talking to it, and Mojodex automatically guesses what you are trying to do among its possible tasks? That would be an interesting feature! It not only helps you go faster but also easily switch between tasks while you’re working on something else.

This feature is called ”routing” and the goal of this research was to first evaluate if it was possible to implement it using a Large Language Model and secondly, to choose the right type of LLM, open-source or private.

Besides doing the research, during my internship I also based my final studies project at Universidad Nacional de Cuyo on it.

I share my acquired experience and my whole research.

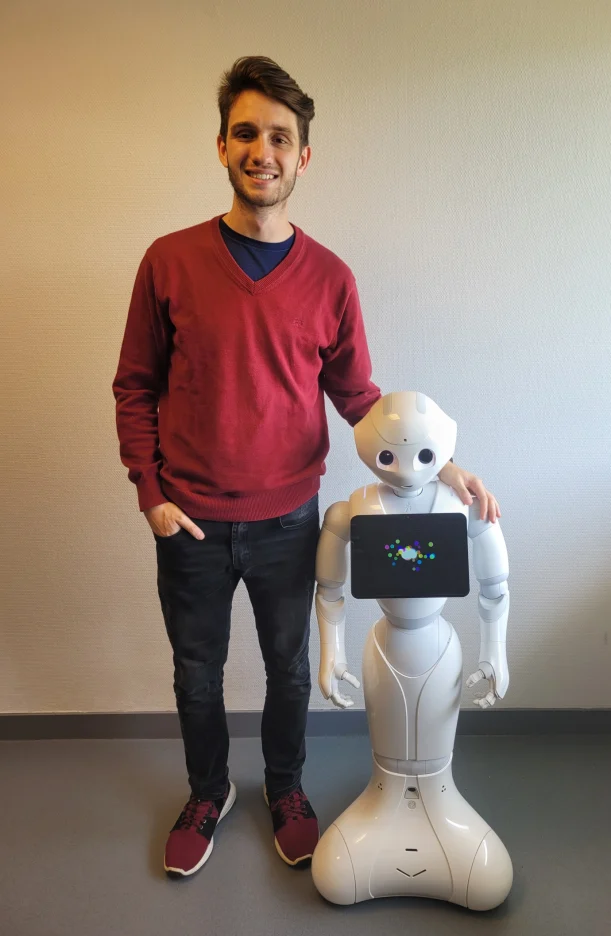

During my first year at École Nationale d’Ingenieurs de Brest in France, I had the chance to work on a thrilling project in the field of Natural Language Processing with my partner Trajano Mena. We worked as part of a team who was participating in a competition called RoboCup@HOME.

The goal was to make the robot capable of passing several challenges which involved computer vision, motion, and human language recognition. In particular, I worked with my partner in the language recognition system where we developed a program written in python that allowed the robot to distinguish when someone started to talk to him and then interpret the actual intention of the request to perform an appropriate action in response.

A tiny demo is shown in the recording above.

This project was part of the Free code camp Machine Learning course and my first approach to artificial intelligence.

The goal of this project was to develop an intelligent algorithm capable of adapting its game to beat different strategies in the classic game Rock, Paper, and Scissors. The project utilized four pre-existing strategies, or bots, which were named:

To beat these bots, I proposed a strategy that involves creating a four dimension NumPy matrix with 3 elements in each one. Each dimension represents the opponent's last four plays, and each element inside represents the three possible games: Rock, Paper, or Scissors.

The matrix keeps track of the frequency of each four sequence plays and increments the count of the respective sequence by one after each play. This approach enabled the algorithm to predict the most probable next move of the opponent based on the frequency of past moves.

The algorithm achieved a minimum of 60% success rate against each bot, which, while not optimal, is still good enough considering the simplicity of the approach.

This project was part of the Free code camp Machine Learning course, which aimed to introduce students to Convolutional Neural Networks (CNNs) . The challenge was to build a CNN capable of distinguishing between cat and dog images.

The image preprocessing was performed using TensorFlow methods, and the Neural Network architecture was built using Keras. The CNN model was trained using a dataset of cat and dog images given as part of the course’s resources.

In addition, the accuracy and loss evolution of the model during the training are displayed using Matplotlib. This allows to visualize how the accuracy and loss of the model changed over time as it processed more and more data.

At the end, the CNN model was able to achieve an accuracy of 70% in correctly classifying cat and dog images.

As a personal opinion, reaching the goal of this challenge was a significant achievement for me. As someone who was coding his first Neural Network, it required a great deal of research and effort to understand the underlying concepts and implement them in code. Nevertheless, it was a rewarding experience to see the model accurately distinguish between cat and dog images.

The challenge of this project, part of the Free code camp Machine Learning course, was to build a Book Recommendation Engine using the K-Nearest Neighbors algorithm. The dataset used for training the model was the Book-Crossings dataset which contains 1.1 million ratings (scale of 1-10) of 270,000 books by 90,000 users.

All the data were treated using Pandas and the Nearest Neighbors algorithm was implemented using Scikit-learn.

The algorithm was designed to suggest five books that are similar to the input book based on the user’s rating for it and the book’s author.

For instance, for the book:

The algorithm will return the following recommendations:

And the distance of each recommendation to the input book calculated by the KNN algorithm.

This project was part of a Machine Learning course where I built a Recurrent Neural Network (RNN) to classify SMS messages as either spam or ham (non-spam).

The dataset used for training the model was obtained from the Free code camp Machine Learning course resources.

The preprocessing of the text data involved first tokenization that was performed using the class Tokenizer of TensorFlow, and then padding of the sequences due to the different sizes of each SMS text passed to the model.

The model architecture was built with Keras and it consists of three layers:

Of all the projects in the course, I found this one the most interesting and challenging due to all the notions I had to learn in order to preprocess the text data.

Using a large data frame contained in the Free code camp Machine Learning course the goal was to use Multiple Linear Regression to know the health expenses of a patient knowing the following information:

The dataset used in this study initially included expense information for all patients. However, to build and evaluate the Multiple Linear Regression model using Keras, the dataset was split into training and testing datasets.

After being trained and evaluated, the final model was able to predict the health costs for a particular patient with a Mean Absolute Error of $3100.

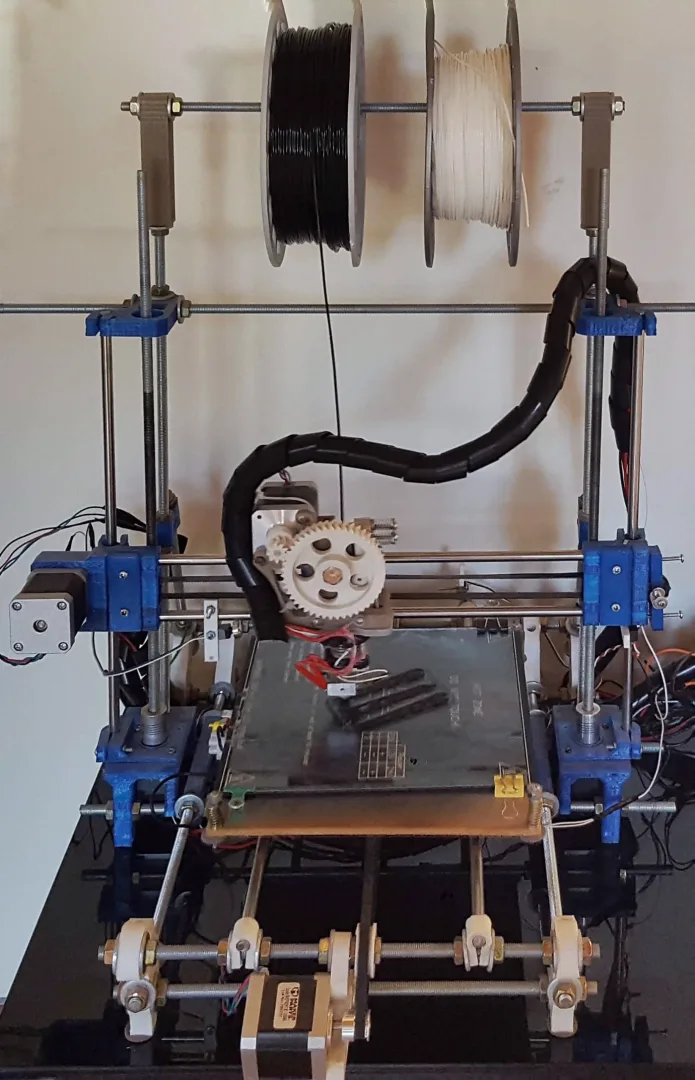

As a passionate enthusiast of both additive manufacturing and DIY projects, I took on the challenge of building my very own 3D printer.

I successfully built it thanks to the extensive resources and support of the RepRap open-source initiative.

It was an incredibly rewarding experience, providing me with hands-on learning opportunities and expanding my skill set and knowledge as an engineering student.

For instance, as part of this project, I took my first steps in using CAD software such as:

as well as slicing software like:

Currently, I primarily use Cura and Fusion 360 for my projects.

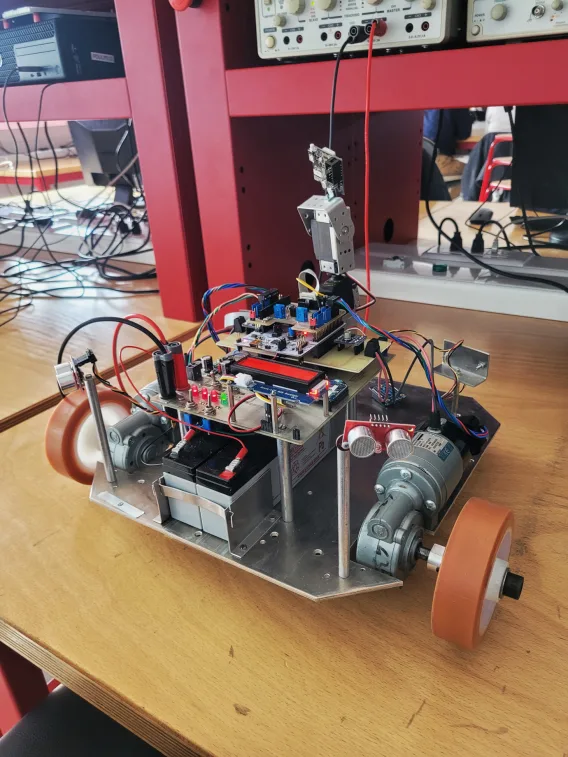

This project was part of the "Digital Embedded Systems" course at École Nationale d’Ingenieurs de Brest.

The objective was to install a real-time operating system (RTOS) to manage the mobile robot in the photo. We created multiple "tasks" to control specific robot features and the RTOS coordinated them to form a complete system, making real-time decisions on which task to run, pause, or stop.

The tasks included:

The "Interactive Applications Design" course at École Nationale d’Ingenieurs de Brest provided me with the opportunity to not only develop an Android mobile app, but also to acquire knowledge in image recognition through the project.

The app developed was a game inspired by the tv show “Dragon Ball Z”. Upon opening the game, the player is prompted to enter the number of Dragon Balls to be sought. Then, a search radius is requested to randomly determine the location of the Dragon Balls. Once these steps are completed, the game can begin.

As it is shown in the video, all the target locations are marked on the compass. The goal is to navigate to the marked location and tap the "Found ball" button, which will activate the camera and allow the player to scan and save the Dragon Ball (for simplicity, the app has been programmed to recognize oranges as the Dragon Balls).

The location feature was accomplished through the use of the smartphone's GPS and compass, while the object recognition was performed using a custom Object Detection Model, trained by me using Google Colab.

This experience provided me with valuable insights and hands-on learning opportunities in the following technologies:

I ultimately chose to employ a Tensorflow Lite model for optimal performance.